1-DAV-202 Data Management 2023/24

Previously 2-INF-185 Data Source Integration


Revision as of 10:27, 13 March 2023

It is 2021. Use python3! The default `python` command on the vyuka server runs Python 2.7, and some of the packages do not work with Python 2. Type `python3` to get Python 3.

Sometimes you may be interested in processing data which is available in the form of a website consisting of multiple webpages (for example an e-shop with one page per item or a discussion forum with pages of individual users and individual discussion topics).

In this lecture, we will extract information from such a website using Python and existing Python libraries. We will store the results in an SQLite database. These results will be analyzed further in the following lectures.

Scraping webpages

In Python, the simplest tool for downloading webpages is the requests package:

import requests

r = requests.get("http://en.wikipedia.org")

print(r.text[:10])
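A slightly more defensive variant of the download above can be sketched as follows. The helper name fetch_page and the 10-second default timeout are assumptions made for illustration; the timeout argument and raise_for_status() are part of the requests API.

```python
import requests


def fetch_page(url, timeout=10):
    """Download one page and return its HTML text (hypothetical helper)."""
    # timeout (in seconds) keeps the script from hanging on an unresponsive server
    r = requests.get(url, timeout=timeout)
    # raise requests.HTTPError for 4xx/5xx responses instead of returning an error page
    r.raise_for_status()
    return r.text
```

Calling fetch_page("http://en.wikipedia.org")[:10] then plays the same role as r.text[:10] in the example above.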

Parsing webpages

When you download one page from a website, it is in HTML format and you need to extract useful information from it. We will use the beautifulsoup4 library for parsing HTML.

Parsing a webpage:

import requests

from bs4 import BeautifulSoup

text = requests.get("http://en.wikipedia.org").text

parsed = BeautifulSoup(text, 'html.parser')

The variable parsed now contains the HTML tree.

Select all nodes with tag <a>:

>>> links = parsed.select('a')

>>> links[1]

<a class="mw-jump-link" href="#mw-head">Jump to navigation</a>

Getting inner text:

>>> links[1].string

'Jump to navigation'

Selecting <li> tags and iterating over their children:

>>> li = parsed.select('li')

>>> li[10]

<li><a href="/wiki/Fearless_(Taylor_Swift_album)" title="Fearless (Taylor Swift album)"><i>Fearless</i> (Taylor Swift album)</a></li>

>>> for ch in li[10].children:

...     print(ch.name)

...

a

>>> ch.string

>>> ch.text

'Fearless (Taylor Swift album)'
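In the session above, ch.string prints nothing because it evaluates to None. The following minimal sketch, run on a shortened copy of that <li> element (the href is abbreviated for illustration), shows when .string works and when get_text() is needed:

```python
from bs4 import BeautifulSoup

html = '<li><a href="/wiki/Fearless"><i>Fearless</i> (Taylor Swift album)</a></li>'
a = BeautifulSoup(html, "html.parser").select("a")[0]

# <a> has two children (an <i> tag and a text node), so .string is None
print(a.string)
# get_text() (equivalent to .text) joins the text of all descendants
print(a.get_text())
# <i> has exactly one text child, so .string returns that text
print(a.i.string)
# attributes of a tag are read like dictionary entries
print(a["href"])
```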

More complicated selection:

parsed.select('li i') # tag inside a tag (at any depth, not necessarily a direct descendant), returns inner tag

parsed.select('li > i') # tag inside a tag (direct descendant), returns inner tag

parsed.select('li > i')[0].parent # gets the parent tag

parsed.select('.interlanguage-link-target') # select anything with class = "..." attribute

parsed.select('a.interlanguage-link-target') # select <a> tag with class = "..." attribute

parsed.select('li .interlanguage-link-target') # select anything with class = "..." attribute located inside an <li> tag

parsed.select('#top') # select anything with id="top"
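To see how these selectors differ, here is a small self-contained sketch. The HTML fragment and the helper texts are made up for illustration; only select and get_text come from beautifulsoup4.

```python
from bs4 import BeautifulSoup

# Made-up fragment that exercises each selector form
html = """
<ul id="top">
  <li><i>direct</i></li>
  <li><a class="interlanguage-link-target" href="/sk"><i>nested</i></a></li>
  <li><span class="interlanguage-link-target">no link</span></li>
</ul>
"""
parsed = BeautifulSoup(html, "html.parser")


def texts(selector):
    """Return the text of every node matched by a CSS selector (hypothetical helper)."""
    return [tag.get_text() for tag in parsed.select(selector)]


print(texts("li i"))                         # ['direct', 'nested']
print(texts("li > i"))                       # ['direct']
print(texts(".interlanguage-link-target"))   # ['nested', 'no link']
print(texts("a.interlanguage-link-target"))  # ['nested']
print(parsed.select("#top")[0].name)         # ul
```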

- In your code, we recommend following the examples at the beginning of the beautifulsoup4 documentation and its examples of CSS selectors. You can also check the general syntax of CSS selectors.

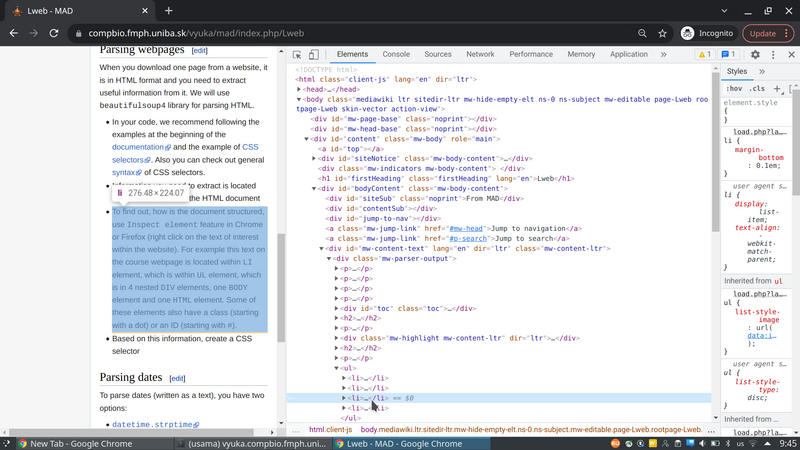

- Information you need to extract is located within the structure of the HTML document

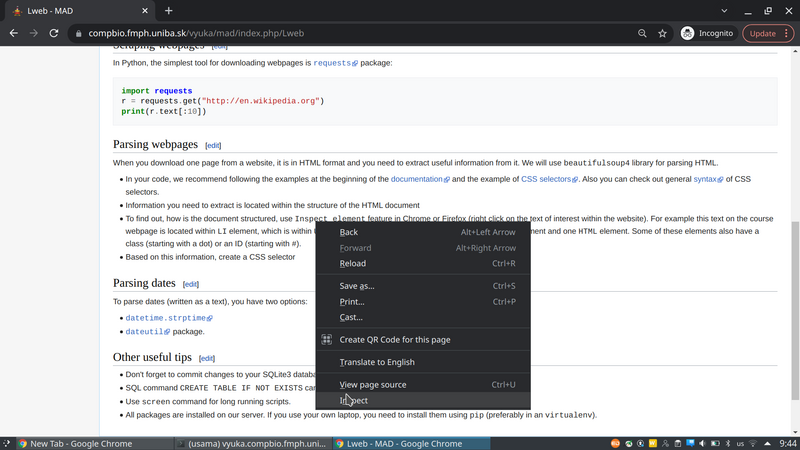

- To find out how the document is structured, use the Inspect element feature in Chrome or Firefox (right-click on the text of interest on the webpage). For example, this text on the course webpage is located within an LI element, which is within a UL element, which is nested in 4 DIV elements, one BODY element and one HTML element. Some of these elements also have a class (shown starting with a dot) or an ID (shown starting with #).

- Based on this information, create a CSS selector and then extract relevant text from selected nodes.
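The workflow described in the list above can be sketched on a small example. The HTML fragment, the id content and the class names posts, author and date are all hypothetical; on a real website you would read them off the Inspect element view and download the page with requests.

```python
from bs4 import BeautifulSoup

# Hypothetical page fragment standing in for a downloaded page
html = """
<html><body>
<div id="content">
  <ul class="posts">
    <li><span class="author">alice</span><span class="date">9.10.18 13:55:26</span></li>
    <li><span class="author">bob</span><span class="date">10.10.18 08:01:00</span></li>
  </ul>
  <ul class="menu"><li>Home</li></ul>
</div>
</body></html>
"""
parsed = BeautifulSoup(html, "html.parser")

rows = []
# The selector mirrors the nesting seen in the inspector:
# element with id "content" -> <ul class="posts"> -> its direct <li> children
for li in parsed.select("#content ul.posts > li"):
    author = li.select_one(".author").get_text(strip=True)
    date = li.select_one(".date").get_text(strip=True)
    rows.append((author, date))

print(rows)
```

Restricting the selector to ul.posts keeps the menu items out of the result, which is usually easier than filtering them out afterwards.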

Parsing dates

To parse dates written as text, you have two options:

- strptime from the standard datetime module, which takes an explicit format string.

>>> import datetime

>>> datetime_str = '9.10.18 13:55:26'

>>> datetime.datetime.strptime(datetime_str, '%d.%m.%y %H:%M:%S')

datetime.datetime(2018, 10, 9, 13, 55, 26)

- dateutil package. Beware that for ambiguous dates the default setting prefers the month-first ("month.day.year") format. This can be changed with the dayfirst flag.

>>> import dateutil.parser

>>> dateutil.parser.parse('2012-01-02 15:20:30')

datetime.datetime(2012, 1, 2, 15, 20, 30)

>>> dateutil.parser.parse('02.01.2012 15:20:30')

datetime.datetime(2012, 2, 1, 15, 20, 30)

>>> dateutil.parser.parse('02.01.2012 15:20:30', dayfirst=True)

datetime.datetime(2012, 1, 2, 15, 20, 30)

Other useful tips

- Don't forget to commit changes to your SQLite3 database (call db.commit()).

- The SQL command CREATE TABLE IF NOT EXISTS can be useful at the start of your script.

- Use the screen command for long-running scripts.

- All packages are installed on our server. If you use your own laptop, you need to install them using pip (preferably in a virtualenv).
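The two database tips above can be combined in a minimal sketch. The table name posts, its columns and the inserted rows are made up for illustration, and an in-memory database is used only to keep the example self-contained; a real script would pass a file name to sqlite3.connect.

```python
import sqlite3

# ":memory:" is an assumption for this sketch; use a file name in a real script
db = sqlite3.connect(":memory:")

# Safe to run on every start: the table is created only if it is missing
db.execute("CREATE TABLE IF NOT EXISTS posts (author TEXT, posted TEXT)")

rows = [("alice", "2018-10-09 13:55:26"), ("bob", "2018-10-10 08:01:00")]
# "?" placeholders let sqlite3 handle quoting of scraped text
db.executemany("INSERT INTO posts VALUES (?, ?)", rows)

# Without commit(), inserted rows in a file-based database may be lost on exit
db.commit()

count = db.execute("SELECT COUNT(*) FROM posts").fetchone()[0]
print(count)
db.close()
```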