1-DAV-202 Data Management 2023/24

Previously 2-INF-185 Data Source Integration

Lmake


Job Scheduling

- Some computing jobs take a lot of time: hours, days, weeks,...

- We do not want to keep a command-line window open the whole time; therefore we run such jobs in the background

- Simple commands to do it in Linux:

- Now we will concentrate on Sun Grid Engine, a complex software system for managing many jobs from many users on a cluster consisting of multiple computers

- Basic workflow:

- Submit a job (command) to a queue

- The job waits in the queue until resources (memory, CPUs, etc.) become available on some computer

- The job runs on the computer

- Output of the job is stored in files

- User can monitor the status of the job (waiting, running)

- Complex possibilities for assigning priorities and deadlines to jobs, managing multiple queues etc.

- Ideally all computers in the cluster share the same environment and filesystem

- We have a simple training cluster for this exercise:

- You submit jobs to the queue on vyuka

- They will run on computer cpu02

- This cluster is only temporarily available until next Thursday

Submitting a job (qsub)

Basic command: qsub -b y -cwd 'command < input > output 2> error'

- quoting the command allows us to include special characters such as <, > etc., so that they apply to the command itself and not to the qsub command

- -b y treats the command as a binary, which is usually preferable for both binary programs and scripts

- -cwd executes command in the current directory

- -N name sets the name of the job

- -l resource=value requests some non-default resources

- for example, we can use -l threads=2 to request 2 threads for parallel programs

- Grid Engine does not check whether you use more CPUs or memory than requested, so be considerate (and perhaps occasionally watch your jobs by running top on the computer where they execute)

- qsub will create files for stdout and stderr, e.g. s2.o27 and s2.e27 for the job with name s2 and jobid 27

Monitoring and deleting jobs (qstat, qdel)

Command qstat displays jobs of the current user

- job 28 is running on server cpu02 (status r), job 29 is waiting in the queue (status qw)

job-ID  prior   name  user      state  submit/start at      queue
------------------------------------------------------------------------------
    28  0.50000 s3    bbrejova  r      03/15/2016 22:12:18  main.q@cpu02
    29  0.00000 s3    bbrejova  qw     03/15/2016 22:14:08

- Command qstat -u '*' displays jobs of all users

- Finished jobs disappear from the list

- Command qstat -F threads shows how many threads are available

queuename                       qtype resv/used/tot. load_avg arch        states
---------------------------------------------------------------------------------
main.q@cpu02.compbio.fmph.unib  BIP   0/2/8          0.03     lx26-amd64
        hc:threads=0
    28  0.75000 s3  bbrejova  r  03/15/2016 22:12:18  1
    29  0.25000 s3  bbrejova  r  03/15/2016 22:14:18  1

- Command qdel followed by a job ID deletes that job (waiting or running)

Interactive work on the cluster (qrsh), screen

Command qrsh creates a job which is a normal interactive shell running on the cluster

- In this shell you can manually run commands

- When you close the shell, the job finishes

- therefore it is a good idea to run qrsh within screen

- run screen command, this creates a new shell

- within this shell, run qrsh, then any commands you need

- by pressing Ctrl-a d you "detach" the screen, so that both shells (local and qrsh) continue running but you can close your local window

- later by running screen -r you get back to your shells

Running many small jobs

For example, we may need to run some computation for each human gene (there are roughly 20,000 such genes). Here are some possibilities:

- Run a script which iterates through all genes and processes them sequentially

- Problems: does not use parallelism; restarting after an interruption needs extra programming

- Submit processing of each gene as a separate job to cluster (submitting done by a script/one-liner)

- Jobs can run in parallel on many different computers

- Problem: Queue gets very long, hard to monitor progress, hard to resubmit only unfinished jobs after some failure.

- Array jobs in qsub (option -t): runs jobs numbered 1, 2, 3, ...; the number of the current job is stored in an environment variable, which the script uses to decide which gene to process

- Queue contains only running sub-jobs plus one line for the remaining part of the array job.

- After failure, you can resubmit only unfinished portion of the interval (e.g. start from job 173).

- Next: use make, in which you specify how to process each gene, and submit a single make command to the queue

- Make can execute multiple tasks in parallel using several threads on the same computer (qsub array jobs can run tasks on multiple computers)

- It automatically skips tasks which are already finished, so restarting is easy
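A minimal sketch of the array-job variant, assuming a hypothetical file genes.txt with one gene name per line (line N belongs to sub-job N) and a hypothetical script name process_gene.sh. Grid Engine stores the number of the current sub-job in the environment variable SGE_TASK_ID; the per-gene computation itself is only a placeholder here.

```shell
#!/bin/bash
# process_gene.sh: hypothetical script for a Grid Engine array job (a sketch).
# Assumption: genes.txt lists one gene name per line; line N belongs to sub-job N.

# Print the gene on line $1 of file $2 (empty output if there is no such line).
gene_for_task() {
  sed -n "${1}p" "$2"
}

# Grid Engine stores the number of the current sub-job in SGE_TASK_ID.
case "${SGE_TASK_ID:-}" in
  '' | *[!0-9]*)
    # not a number: the script is not running as a sub-job of an array job
    ;;
  *)
    gene=$(gene_for_task "$SGE_TASK_ID" genes.txt)
    if [ -z "$gene" ]; then
      echo "no gene on line $SGE_TASK_ID of genes.txt" >&2
      exit 1
    fi
    echo "task $SGE_TASK_ID: processing $gene"
    # ... the actual computation for "$gene" would go here ...
    ;;
esac
```

After chmod +x process_gene.sh, the whole array could be submitted as qsub -b y -cwd -t 1-20000 ./process_gene.sh; after a failure, changing the range to -t 173-20000 resubmits only the unfinished part.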

Make

Make is a system for automatically building programs (running the compiler, linker, etc.)

- In particular, we will use GNU make

- Rules for compilation are written in a Makefile

- Rather complex syntax with many features, we will only cover basics

Rules

- The main part of a Makefile consists of rules specifying how to generate target files from some source files (prerequisites).

- For example the following rule generates file target.txt by concatenating files source1.txt and source2.txt:

target.txt : source1.txt source2.txt
	cat source1.txt source2.txt > target.txt

- The first line lists the target and its prerequisites; it starts in the first column

- The following lines list commands to execute to create the target

- Each line with a command starts with a tab character

- If we have a directory with this rule in file called Makefile and files source1.txt and source2.txt, running make target.txt will run the cat command

- However, if target.txt already exists, the command will be run only if one of the prerequisites has more recent modification time than the target

- This makes it possible to restart interrupted computations or to rerun only the necessary parts after some input files are modified

- make automatically chains the rules as necessary:

- if we run make target.txt and some prerequisite does not exist, make checks if it can be created by some other rule and runs that rule first

- In general, it first finds all necessary steps and runs them in an appropriate order so that each rule has its prerequisites ready

- Option make -n target will show which commands would be executed to build target (dry run) - good idea before running something potentially dangerous
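The following shell sketch recreates the rule above in a scratch directory (created with mktemp) and runs make several times, so you can observe when the cat command is and is not executed. The file contents are made up for the demonstration.

```shell
#!/bin/bash
# Sketch: watch make decide when the concatenation rule has to run.
# Everything happens in a fresh scratch directory; file contents are made up.
workdir=$(mktemp -d)
cd "$workdir" || exit 1

printf 'first\n' > source1.txt
printf 'second\n' > source2.txt
# \t writes the tab character that must start each command line of a rule
printf 'target.txt : source1.txt source2.txt\n\tcat source1.txt source2.txt > target.txt\n' > Makefile

make target.txt      # target.txt does not exist yet: the cat command runs
make target.txt      # target.txt is newer than both sources: nothing to do
sleep 1
touch source2.txt    # now source2.txt is newer than target.txt
make -n target.txt   # dry run: prints the cat command without running it
cd - > /dev/null
```

The make -q option (exit status 0 only if the target is up to date) is a convenient way to check the same decision from a script.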

Pattern rules

We can specify a general rule for files with a systematic naming scheme. For example, to create a .pdf file from a .tex file, we use the pdflatex command:

%.pdf : %.tex
	pdflatex $^

- In the first line, % denotes some variable part of the filename, which must be the same in the target and all prerequisites

- In commands, we can use several variables:

- Variable $^ contains the names of the prerequisites (source)

- Variable $@ contains the name of the target

- Variable $* contains the string matched by %
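A small sketch to see what the automatic variables contain: a hypothetical pattern rule that builds NAME.upper from NAME.txt by converting the text to upper case, run in a scratch directory.

```shell
#!/bin/bash
# Sketch: a pattern rule using the automatic variables $@, $^ and $*.
# Hypothetical rule: build NAME.upper from NAME.txt by converting to upper case.
pdir=$(mktemp -d)
cd "$pdir" || exit 1
printf 'hello\n' > notes.txt
# In the printf format, %% produces a literal % and \t the tab before each command
printf '%%.upper : %%.txt\n\t@echo "target=$@ prereqs=$^ stem=$*"\n\ttr a-z A-Z < $^ > $@\n' > Makefile
make -s notes.upper   # prints: target=notes.upper prereqs=notes.txt stem=notes
cat notes.upper       # prints: HELLO
cd - > /dev/null
```

The @ at the start of the echo command stops make from printing the command itself, and -s silences the other commands, so only the echo output is shown.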

Other useful tricks in Makefiles

Variables

Store some reusable values in variables, then use them several times in the Makefile:

MYPATH := /projects/trees/bin

target : source
	$(MYPATH)/script < $^ > $@

Wildcards, creating a list of targets from files in the directory

The following Makefile automatically creates .png version of each .eps file simply by running make:

EPS := $(wildcard *.eps)
EPSPNG := $(patsubst %.eps,%.png,$(EPS))

.PHONY: all clean

all: $(EPSPNG)

clean:
	rm -f $(EPSPNG)

%.png : %.eps
	convert -density 250 $^ $@

- variable EPS contains names of all files matching *.eps

- variable EPSPNG contains the desired names of the .png files

- it is created by taking the filenames in EPS and changing .eps to .png

- all is a "phony target" which is not really created

- its rule has no commands, but all .png files are its prerequisites, so they are built first

- the first target in a Makefile (in this case all) is default when no other target is specified on the command-line

- clean is also a phony target for deleting generated .png files

Useful special built-in target names

Include these lines in your Makefile if desired

# prevent deletion of intermediate targets in chained rules
.SECONDARY:

# delete the target of a rule if its commands fail
.DELETE_ON_ERROR:
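To see both special targets in action, the following sketch runs the same small makefiles with and without them. It works in a scratch directory; the file names and the suffixes .raw, .mid and .fin are made up for the demonstration.

```shell
#!/bin/bash
# Sketch: the effect of .SECONDARY and .DELETE_ON_ERROR (scratch directory,
# made-up file names and suffixes).
sdir=$(mktemp -d)
cd "$sdir" || exit 1

# Chained pattern rules: NAME.fin is made from NAME.mid, made from NAME.raw
printf '%%.mid : %%.raw\n\tcp $^ $@\n%%.fin : %%.mid\n\tcp $^ $@\n' > chain.mk
printf '.SECONDARY:\n' | cat - chain.mk > keep.mk
echo data > x.raw
echo data > y.raw
make -f chain.mk x.fin   # creates x.mid and x.fin, then removes intermediate x.mid
make -f keep.mk y.fin    # creates y.mid and y.fin, and keeps y.mid

# A rule that writes part of its target and then fails
printf 'out.txt :\n\techo partial > $@ && false\n' > fail.mk
printf '.DELETE_ON_ERROR:\n' | cat - fail.mk > safe.mk
make -f fail.mk out.txt 2> /dev/null || true   # fails; the partial out.txt stays
mv out.txt partial.txt                          # set the leftover aside
make -f safe.mk out.txt 2> /dev/null || true   # fails; make deletes out.txt
cd - > /dev/null
```

Without .DELETE_ON_ERROR, a half-written target looks up to date on the next run, so make would silently skip it.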

Parallel make

Running make with option -j 4 runs up to 4 commands in parallel, as long as their prerequisites are already finished. This allows easy parallelization on a single computer.
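The sketch below combines the wildcard/patsubst pattern from the previous section with -j. The task is hypothetical: for each .txt file, a .len file with its line count is created, and sleep 1 stands in for a slow computation. Since the four rules are independent, make -j 4 should finish in roughly one second, while plain make needs about four.

```shell
#!/bin/bash
# Sketch: the wildcard/patsubst pattern combined with parallel make.
# Hypothetical task: write the line count of every .txt file to a .len file;
# "sleep 1" stands in for a slow computation.
jdir=$(mktemp -d)
cd "$jdir" || exit 1
for i in 1 2 3 4; do seq "$i" > "part$i.txt"; done
printf 'TXT := $(wildcard *.txt)\nLEN := $(patsubst %%.txt,%%.len,$(TXT))\n\n.PHONY: all\nall: $(LEN)\n\n%%.len : %%.txt\n\tsleep 1; wc -l < $^ > $@\n' > Makefile
make -s -j 4   # the four independent rules run at the same time
cd - > /dev/null
```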

Alternatives to Makefiles

- Bioinformaticians often use "pipelines": sequences of commands run one after another, e.g. by a script or make

- There are many tools developed for automating computational pipelines, see e.g. this review: Jeremy Leipzig; A review of bioinformatic pipeline frameworks. Brief Bioinform 2016.

- For example Snakemake

- Snakemake workflows can contain shell commands or Python code

- Big advantage compared to make: pattern rules may contain multiple variable portions (in make only one % per filename)

- For example, assume we have several FASTA files and several profiles (HMMs) representing protein families and we want to run each profile on each FASTA file:

rule HMMER:
    input: "{filename}.fasta", "{profile}.hmm"
    output: "{filename}_{profile}.hmmer"
    shell: "hmmsearch --domE 1e-5 --noali --domtblout {output} {input[1]} {input[0]}"

HWmake

See also the lecture

Motivation: Building Phylogenetic Trees

The task for today will be to build a phylogenetic tree of 9 mammalian species using protein sequences

- A phylogenetic tree is a tree showing evolutionary history of these species. Leaves are the present-day species, internal nodes are their common ancestors.

- The input contains sequences of all proteins from each species (we will use only a smaller subset)

- The process is typically split into several stages, shown below

Identify ortholog groups

Orthologs are proteins from different species that "correspond" to each other. Orthologs are found based on sequence similarity; we can use a tool called blast to identify sequence similarities between pairs of proteins. The result of ortholog group identification will be a set of groups, each group having one sequence from each of the 9 species.

Align proteins on each group

For each ortholog group, we need to align the proteins in the group to identify corresponding parts of the proteins. This is done by a tool called muscle.

Unaligned sequences (start of protein O60568):

>human
MTSSGPGPRFLLLLPLLLPPAASASDRPRGRDPVNPEKLLVITVA...
>baboon
MTSSRPGLRLLLLLLLLPPAASASDRPRGRDPVNPEKLLVMTVA...
>dog
MASSGPGLRLLLGLLLLLPPPPATSASDRPRGGDPVNPEKLLVITVA...
>elephant
MASWGPGARLLLLLLLLLLPPPPATSASDRSRGSDRVNPERLLVITVA...
>guineapig
MAFGAWLLLLPLLLLPPPPGACASDQPRGSNPVNPEKLLVITVA...
>opossum
SDKLLVITAA...
>pig
AMASGPGLRLLLLPLLVLSPPPAASASDRPRGSDPVNPDKLLVITVA...
>rabbit
MGCDSRKPLLLLPLLPLALVLQPWSARGRASAEEPSSISPDKLLVITVA...
>rat
MAASVPEPRLLLLLLLLLPPLPPVTSASDRPRGANPVNPDKLLVITVA...

Aligned sequences:

rabbit     MGCDSRKPLL LLPLLPLALV LQPW-SARGR ASAEEPSSIS PDKLLVITVA ...
guineapig  MAFGA----W LLLLPLLLLP PPPGACASDQ PRGSNP--VN PEKLLVITVA ...
opossum    ---------- ---------- ---------- ---------- SDKLLVITAA ...
rat        MAASVPEPRL LLLLLLLLPP LPPVTSASDR PRGANP--VN PDKLLVITVA ...
elephant   MASWGPGARL LLLLLLLLLP PPPATSASDR SRGSDR--VN PERLLVITVA ...
human      MTSSGPGPRF LLLLPLLL-- -PPAASASDR PRGRDP--VN PEKLLVITVA ...
baboon     MTSSRPGLRL LLLLLLL--- -PPAASASDR PRGRDP--VN PEKLLVMTVA ...
dog        MASSGPGLRL LLGLLLLL-P PPPATSASDR PRGGDP--VN PEKLLVITVA ...
pig        AMASGPGLR- LLLLPLLVLS PPPAASASDR PRGSDP--VN PDKLLVITVA ...

Build a phylogenetic tree for each group

For each alignment, we build a phylogenetic tree for this group. We will use a program called phyml.

Example of a phylogenetic tree in newick format:

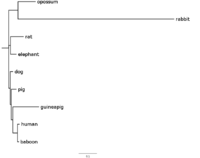

((opossum:0.09636245,rabbit:0.85794020):0.05219782, (rat:0.07263127,elephant:0.03306863):0.01043531, (dog:0.01700528,(pig:0.02891345, (guineapig:0.14451043, (human:0.01169266,baboon:0.00827402):0.02619598 ):0.00816185):0.00631423):0.00800806);
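In the Newick format, each leaf is written as name:branch length, and each internal node as a parenthesized list of its children followed by its own branch length. As a quick illustration, this sketch lists the leaves of the tree above with their branch lengths; it assumes that leaf names consist only of letters, as in this example.

```shell
#!/bin/bash
# Sketch: list the leaves of a Newick tree with their branch lengths.
# Assumption: leaf names consist of letters only, as in the example tree.
newick_leaves() {
  # a leaf is a name followed by ":length"; internal nodes have ")" before ":"
  grep -oE '[A-Za-z]+:[0-9.]+' | tr ':' '\t'
}

tree='((opossum:0.09636245,rabbit:0.85794020):0.05219782,(rat:0.07263127,elephant:0.03306863):0.01043531,(dog:0.01700528,(pig:0.02891345,(guineapig:0.14451043,(human:0.01169266,baboon:0.00827402):0.02619598):0.00816185):0.00631423):0.00800806);'
printf '%s\n' "$tree" | newick_leaves   # one "name<TAB>length" line per species
```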

Build a consensus tree

The result of the previous step will be several trees, one for every group. Ideally, all trees would be identical, showing the real evolutionary history of the 9 species. But it is not easy to infer the real tree from sequence data, so the trees from different groups might differ. Therefore, in the last step, we will build a consensus tree. This can be done by using a tool called Phylip. The output is a single consensus tree.

Files and submitting

Our goal is to build a pipeline that automates the whole task using make and execute it remotely using qsub. Most of the work is already done, only small modifications are necessary.

- Submit by copying requested files to /submit/make/username/

- Do not forget to submit the protocol; its outline is in /tasks/make/protocol.txt

Start by copying directory /tasks/make to your user directory

cp -ipr /tasks/make .

cd make

The directory contains three subdirectories:

- large: a larger sample of proteins for task A

- tiny: a very small set of proteins for task B

- small: a slightly larger set of proteins for task C

Task A (long job)

- In this task, you will run a long alignment job (more than two hours)

- Use directory large with files:

- ref.fa: selected human proteins

- other.fa: selected proteins from 8 other mammalian species

- Makefile: runs blast on ref.fa vs other.fa (also formats database other.fa before that)

- run make -n to see what commands will be done (you should see makeblastdb, blastp, and echo for timing)

- copy the output to the protocol

- run qsub with appropriate options to run make (at least -cwd -b y)

- then run qstat > queue.txt

- Submit file queue.txt showing your job waiting or running

- When your job finishes, check the following files:

- the output file ref.blast

- standard output from the qsub job, which is stored in a file named e.g. make.oX where X is the number of your job. The output shows the time when your job started and finished (this information was written by commands echo in the Makefile)

- Submit the last 100 lines from ref.blast under the name ref-end.blast (use tool tail -n 100) and the file make.oX mentioned above

Task B (finishing Makefile)

- In this task, you will finish a Makefile for splitting blast results into ortholog groups and building phylogenetic trees for each group

- This Makefile works with much smaller files and so you can run it quickly many times without qsub

- Work in directory tiny

- ref.fa: 2 human proteins

- other.fa: a selected subset of proteins from 8 other mammalian species

- Makefile: a longer makefile

- brm.pl: a Perl script for finding ortholog groups and sorting them to directories

The Makefile runs the analysis in four stages. Stages 1, 2 and 4 are done; you have to finish stage 3.

- If you run make without argument, it will attempt to run all 4 stages, but stage 3 will not run, because it is missing

- Stage 1: run as make ref.brm

- It runs blast as in task A, then splits proteins into ortholog groups and creates one directory for each group with file prot.fa containing protein sequences

- Stage 2: run as make alignments

- In each directory with an ortholog group, it will create an alignment prot.phy and link it under names lg.phy and wag.phy

- Stage 3: run as make trees (needs to be written by you)

- In each directory with an ortholog group, it should create files lg.phy_phyml_tree and wag.phy_phyml_tree containing the results of the phyml program run with two different evolutionary models WAG and LG, where LG is the default

- Run phyml by commands of the form:

phyml -i INPUT --datatype aa --bootstrap 0 --no_memory_check >LOG

phyml -i INPUT --model WAG --datatype aa --bootstrap 0 --no_memory_check >LOG

- Change INPUT and LOG in the commands to the appropriate filenames using make variables $@, $^, $* etc. The input should come from lg.phy or wag.phy in the directory of a gene, and the log should have the same name as the tree with extension .log added (e.g. lg.phy_phyml_tree.log)

- Also add variables LG_TREES and WAG_TREES listing filenames of all desirable trees and uncomment phony target trees which uses these variables

- Stage 4: run as make consensus

- Output trees from stage 3 are concatenated for each model separately to files lg/intree, wag/intree and then phylip is run to produce consensus trees lg.tree and wag.tree

- This stage also needs variables LG_TREES and WAG_TREES to be defined by you.

- Run your Makefile and check that the files lg.tree and wag.tree are produced

- Submit the whole directory tiny, including Makefile and all gene directories with tree files.

Task C (running make)

- Copy your Makefile from part B to directory small, which contains 9 human proteins and run make on this slightly larger set

- Again, run it without qsub; it will take some time, particularly if the server is busy

- Look at the two resulting trees (wag.tree, lg.tree) using the figtree program

- it is available on vyuka, but you can also install it on your computer if needed

- In figtree, change the position of the root in the tree to make the opossum the outgroup (species branching as the first away from the others). This is done by clicking on opossum and thus selecting it, then pressing the Reroot button.

- Also switch on displaying branch labels. These labels show for each branch of the tree, how many of the input trees support this branch. To do this, use the left panel with options.

- Export the trees in pdf format as wag.tree.pdf and lg.tree.pdf

- Compare the two trees

- Note that the two children of each internal node are equivalent, so their placement higher or lower in the figure does not matter.

- Do the two trees differ? What is the highest and lowest support for a branch in each tree?

- Also compare your trees with the accepted "correct tree" found here http://genome-euro.ucsc.edu/images/phylo/hg38_100way.png (note that this tree contains many more species, but all ours are included)

- Write your observations to the protocol

- Submit the entire small directory (including the two pdf files)

Further possibilities

Here are some possibilities for further experiments, in case you are interested (do not submit these):

- You could copy your extended Makefile to directory large and create trees for all ortholog groups in the big set

- This would take a long time, so submit it through qsub, and only some time after the lecture is over, so that classmates can still work on task A

- After ref.brm is done, programs for individual genes can be run in parallel, so you can try running make -j 2 and request 2 threads from qsub

- Phyml also supports other models, for example JTT (see manual); you could try to play with those.

- Command touch FILENAME will change the modification time of the given file to the current time

- What happens when you run touch on some of the intermediate files in the analysis in task B? Does the Makefile always run properly?